What’s 🔥 in Enterprise IT/VC #491

Why AI is not killing the cybersecurity industry, but expanding it exponentially - thoughts from RSA

Cybersecurity was front and center this week not only because of RSA, cybersecurity’s Super Bowl, but also because of this bombshell article from Fortune about Claude Mythos and the LiteLLM hack.

Let’s start with Anthropic

IMO, the market oversells and then corrects.

I actually think that when you dig in, the opportunity is even bigger.

Here’s the math that keeps cybersecurity investors up at night (in a good way). Every new model release expands the market from both directions simultaneously. On one side, the attack surface: more agents writing more code, more APIs, more autonomous workloads spinning up infrastructure no human reviewed.

On the other side, the attackers: AI-powered exploitation has compressed average breakout times from 48 minutes to 29 minutes (with the fastest recorded at 27 seconds), zero-day development from 40 days to effectively minus-1 day, and social engineering now scales infinitely. Claude Mythos leaking the same week as RSA, with Anthropic themselves calling it an “unprecedented cybersecurity risk,” is the proof point. AI is not killing the cybersecurity industry. It’s expanding it exponentially. Every model release is the gift that keeps on giving to cybersecurity.

Now here’s a real-world example that captures this perfectly. LiteLLM is basically the standard adapter that almost every modern AI/agent stack uses to call LLMs (OpenAI, Anthropic, Grok, Gemini, etc.).

If you dig into the Snyk post above, AI is the attacker. The threat actor, TeamPCP, used a component called hackerbot-claw. This tool leverages an AI agent (specifically OpenClaw) to automate attack targeting. Researchers describe this as one of the first documented cases of an AI agent being used operationally in a supply chain attack.

But a human actually caught it, not AI! Despite the high-tech nature of the attack, it wasn’t a “security bot” that first raised the alarm. It was a human developer, Callum McMahon at FutureSearch. Callum caught the LiteLLM compromise because the malicious payload triggered a fork bomb that crashed the system. Automated scanners missed it because they had been fooled by legitimate maintainer credentials and valid pip hashes.
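The “valid pip hashes” detail deserves a quick illustration. A hash check only proves that the bytes you downloaded match what was published. If the attacker publishes with legitimate maintainer credentials, the malicious artifact's digest is the valid one. Here is a minimal Python sketch of that idea. The byte strings are made-up stand-ins for package contents, and `sha256_hex` and `hash_matches` are hypothetical helper names, not pip's or LiteLLM's actual code.

```python
import hashlib
import hmac


def sha256_hex(artifact: bytes) -> str:
    """Digest of an artifact, as a package index would publish it."""
    return hashlib.sha256(artifact).hexdigest()


def hash_matches(artifact: bytes, published_digest: str) -> bool:
    """Integrity check: do the downloaded bytes match the published digest?"""
    return hmac.compare_digest(sha256_hex(artifact), published_digest)


# Hypothetical stand-ins for package contents, not real payloads.
clean_release = b"def completion(): return 'ok'"
poisoned_release = b"def completion(): return 'ok'  # plus attacker code"

# The attacker publishes with stolen maintainer credentials, so the
# index records the digest of the poisoned bytes as the official one.
published_digest = sha256_hex(poisoned_release)

# The check passes for the poisoned release and fails for the clean one.
poisoned_passes = hash_matches(poisoned_release, published_digest)
clean_passes = hash_matches(clean_release, published_digest)
```

Hash pinning protects against tampering in transit. It says nothing about whether the publisher was the person you think it was.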

The LiteLLM attack is the perfect case study for why a layered approach matters. LLMs alone aren’t enough for defense. Foundation models are stochastic by nature. They can find 500 vulnerabilities in a codebase, but were any of them real? Were they submitted? Were they prioritized? CISOs don’t just want probabilistic scanning. They want determinism. They want to know that when a vulnerability is flagged, it’s provably there and provably fixed.

The enterprises I spoke with at RSA are converging on a layered approach: LLM-powered discovery to cast a wide net across massive codebases at speed, combined with deterministic verification to confirm, prioritize, and remediate with certainty. Neither layer works alone. Stochastic scanning without determinism gives you noise. Determinism without AI-powered discovery gives you the same slow, manual process that can’t keep pace with agents writing code at machine speed. And as LiteLLM showed, add humans to the mix because these hackers are creating sophisticated exploits that still require human oversight and judgment.
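To make the layering concrete, here is a rough Python sketch of how the three layers could hand off to each other. It is an illustration under assumptions, not any vendor's pipeline: `Finding`, `triage`, the `REPRODUCED` set, and the file paths are all hypothetical, and the “deterministic check” is simulated with a lookup where a real system might use a reproducing test or a static proof.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass(frozen=True)
class Finding:
    """A candidate vulnerability proposed by a hypothetical LLM scanner."""
    rule: str
    location: str


def triage(
    candidates: Iterable[Finding],
    verify: Callable[[Finding], bool],
    needs_human: Callable[[Finding], bool],
) -> Dict[str, List[Finding]]:
    """Take stochastic candidates, keep only those a deterministic check
    confirms, and split the confirmed ones between automation and humans."""
    queues: Dict[str, List[Finding]] = {
        "discarded": [],
        "auto_fix": [],
        "human_review": [],
    }
    for finding in candidates:
        if not verify(finding):
            queues["discarded"].append(finding)      # unconfirmed noise
        elif needs_human(finding):
            queues["human_review"].append(finding)   # needs judgment
        else:
            queues["auto_fix"].append(finding)       # confirmed and routine
    return queues


# Toy data: pretend these locations were reproduced deterministically.
REPRODUCED = {"api/auth.py:42", "billing/export.py:7"}

candidates = [
    Finding("authz-bypass", "api/auth.py:42"),
    Finding("sql-injection", "billing/export.py:7"),
    Finding("sql-injection", "docs/example.py:3"),  # cannot be reproduced
]

queues = triage(
    candidates,
    verify=lambda f: f.location in REPRODUCED,
    needs_human=lambda f: f.rule.startswith("authz"),
)
```

In this toy run, one finding is dropped as noise, one is routed to automated remediation, and the authorization issue goes to a person.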

The companies that nail this layered architecture - combining the breadth of AI with the precision of deterministic analysis and the judgment of humans - will own the next generation of application security.

Here are other things that stood out top of mind from RSA 2026:

Top thoughts from RSA

Agents, agents, agents. The biggest concern from CISOs I spoke with was agent identity and permissions - how to enable agents to have their own identity, how long-lived, how finely scoped. It’s all about goal-seeking behavior - agents pulling in whatever tool is necessary to realize a goal and doing it relentlessly. That blast radius scares the living daylights out of CISOs.
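One way to picture “own identity, short-lived, finely scoped” is a per-agent credential that is denied by default. The Python sketch below is a toy model under assumptions: `AgentCredential`, `issue`, `is_allowed`, the scope strings, and the 15-minute lifetime are all made up for illustration and do not reflect any specific identity product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class AgentCredential:
    """Hypothetical per-agent identity: who, what it may do, until when."""
    agent_id: str
    scopes: FrozenSet[str]
    expires_at: datetime


def issue(agent_id: str, scopes: Iterable[str], ttl: timedelta,
          now: datetime) -> AgentCredential:
    """Mint a short-lived credential limited to the listed scopes."""
    return AgentCredential(agent_id, frozenset(scopes), now + ttl)


def is_allowed(cred: AgentCredential, action: str, now: datetime) -> bool:
    """Deny by default: the action must be in scope and the credential unexpired."""
    return now < cred.expires_at and action in cred.scopes


# Arbitrary fixed timestamp so the example is deterministic.
issued_at = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
cred = issue("invoice-agent", {"invoices:read"}, timedelta(minutes=15), issued_at)

in_scope = is_allowed(cred, "invoices:read", issued_at + timedelta(minutes=5))
out_of_scope = is_allowed(cred, "payments:write", issued_at + timedelta(minutes=5))
after_expiry = is_allowed(cred, "invoices:read", issued_at + timedelta(minutes=20))
```

A goal-seeking agent that reaches for a new tool gets a denial instead of a bigger blast radius, and anything it did obtain stops working when the credential expires.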

Alert and permission fatigue. Even when agents get fine-grained policies and controls, human escalation still matters. But will security practitioners or line-of-business owners drown in permission fatigue from having to approve every new thing? Every org is taking a different approach to this.

Humans still matter. CISOs and founders were networking in full force. This is how deals still get done at RSA. Trust matters.

LLMs alone aren’t enough for defense. One F500 CISO said what Anthropic showed them blew them away, but some of his team told me that while it’s super cool to find 500 vulnerabilities, were any of them real, and were they submitted? The foundation model tech is real, but there’s still a wide gap in helping security teams not only find vulnerabilities but also fix them and collaborate on them. There’s room to bring a multilayer approach to AppSec, as CISOs want determinism in addition to stochastic scanning.

Every security company is going agentic. There was no founder I spoke with or who spoke on panels that didn’t discuss how important becoming a full agentic company was. Efficiency, speed, eating their own dog food. That said, a number of CISOs pointed out that as more security startups become agent-first, how do they know the code they’re generating is really secure when their engineers aren’t reviewing it with a fine-tooth comb? We may have some security issues next year as every security vendor becomes agent-first. The irony would be brutal.

Nation states. Banks, military, utilities all discussed how activity has spiked in a massive way.

Social engineering massively on the rise. Proofpoint’s CEO said they used to see 3-4 super sophisticated social engineering campaigns a day and now see a dozen per day. One company that stood out at the RSA Innovation Sandbox for top next-gen startups was Humanix (yes, I’m on the board 😄) which aims to help solve this problem with a human threat detection and response platform. Stop blaming humans with endless training and instead empower security teams.

Breakout times are collapsing. AI-powered exploitation has compressed average breakout time from 48 minutes to 29 minutes, with the fastest recorded at 27 seconds for full automation. Attackers can now reason through unfamiliar environments instead of relying on manual expertise. When offense moves in seconds, defense can’t move in hours.
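For a sense of scale, here is the back-of-the-envelope arithmetic on those figures in Python. The inputs are the numbers quoted above; the variable names are mine.

```python
# Breakout times quoted above, converted to seconds.
before_s = 48 * 60    # previous average: 48 minutes
after_s = 29 * 60     # current average: 29 minutes
fastest_s = 27        # fastest recorded: 27 seconds

# Fractional drop in the average breakout time.
avg_reduction = 1 - after_s / before_s

# How many times faster the fastest case is than the old average.
fastest_speedup = before_s / fastest_s

print(f"Average breakout time fell by about {avg_reduction:.0%}")
print(f"Fastest case is roughly {fastest_speedup:.0f}x quicker than the old average")
```

That works out to roughly a 40% drop in the average, and a fastest case about two orders of magnitude quicker than the old average.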

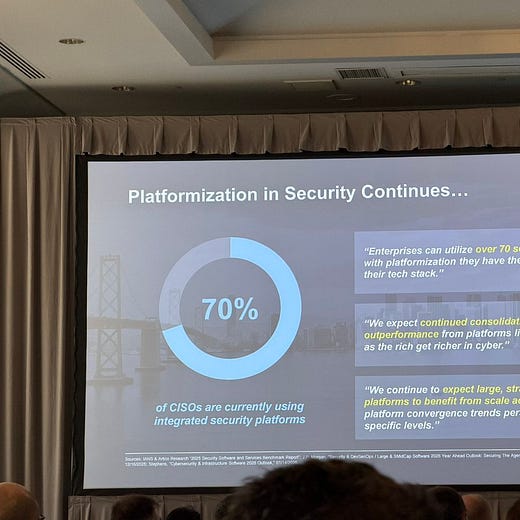

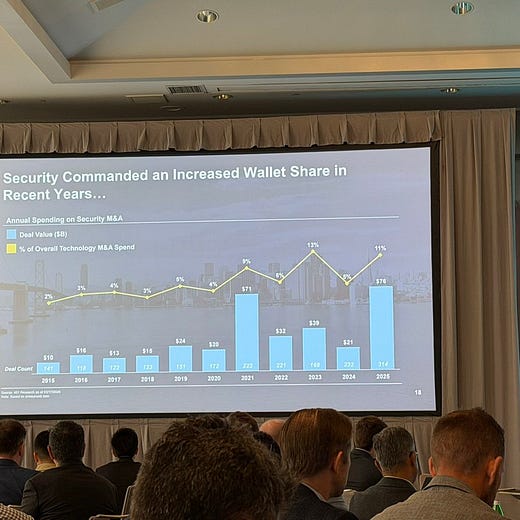

Platform consolidation is accelerating, but the M&A sweet spot is below $1B. Palo Alto went from 4 products to 75 in 7.5 years, compressing operating margins from 20%+ down to 7% to fund it. Only 4 acquirers have $40B+ market cap in cybersecurity. Average security company trades at 5-7x revenue. For founders: build something great in a category adjacent to a platform player and the exit path is clear. But as Nikesh put it, you stop building product, you die.

Supply Chain Risk Has a New AI Vector: There’s a dangerous new supply chain attack surface emerging: compromised AI skills and plugins. Rapid adoption of third-party AI tools is creating unvetted entry points across the enterprise. Prompt-based malware generation and PowerShell script exfiltration can now happen without any external components. The largest financial institutions are already requiring vendors to show detailed compromise scenarios and safeguards before onboarding. For startups selling to enterprises: expect rigorous threat modeling as table stakes.

The CISO Role Is Flipping from Gatekeeper to Enabler: The winning CISOs are positioning themselves as people who “make AI better,” not blockers. The shift is from “sanctioned only” to enablement with guardrails. New product categories like AI Control Towers are emerging for agent discovery and management. The metric that matters internally: high AI usage velocity + high governance percentage. For founders: frame your pitch around enabling more AI adoption, not just preventing bad outcomes.

Cyber teams are shrinking and the KPIs are changing. Multiple founders on the JPM panels said security teams will get significantly smaller over the next 5 years while delivering better outcomes. The metric to watch: what percentage of your workforce is doing 80% of the job vs. managing automation doing 80% of the job. Revenue-to-headcount ratios are the new KPI. If you’re building in security, sell efficiency and automation, not headcount expansion.

Finally, the quote of the week came from my friend Maor Friedman of F2 Capital. As we were running around from event to event, he looked at me and said, “Ed, you know who we are right? We’re forward deployed VCs.” Yes, that about sums up the week!

As always, 🙏🏼 for reading and please share with your friends and colleagues!

Scaling Startups

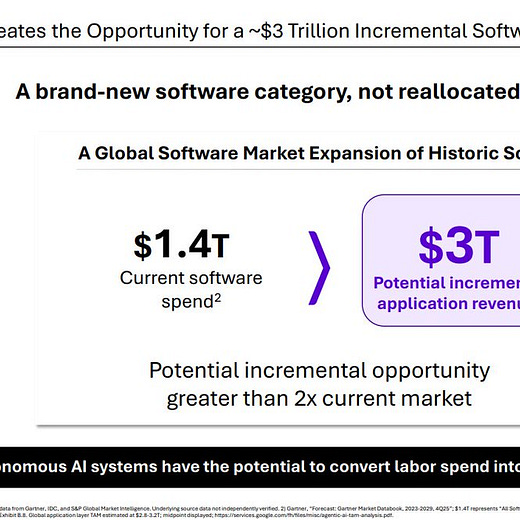

#👇🏼 spot on advice for later stage cos from David George at a16z: There are only two paths left for software

Path one: accelerate growth off new AI products

Accelerating growth with new AI products does not mean bolting on chatbots or copilot interfaces, attached to the old SKU list.

It means new products that can move the company’s total growth rate by 10 points within 12 months. And, just as importantly, it means you need to speedrun rebuilding your company - including your executive team - so that if you do find product market fit, you will actually capitalize on the opportunity.

#🙏🏻 to be on this shortlist of inception investors…

#so many 💎 in here from Farhan VP Eng Shopify on getting your org wired with agents but one of 🔑 is think before you just prompt!

#🤔

#what’s happening at YC

Enterprise Tech

#the age old debate - everyone has their own vertical model now, starting with Cursor and now Intercom and Decagon and … is the juice worth the squeeze? The fundamental debate: with all of that data, does it make sense to take an open source model and staff a team to build your own, or will the latest big model release from the top LLM builders just destroy any gains from fine-tuning? In Intercom’s case, it now uses Apex 1.0, a model they fine-tuned (post-trained) from an unnamed open-weights base model - team of 60 built for a year…

Clem from Hugging Face weighs in

Finally, Rahul Goyal, head of applied AI at Ramp, argues that rapid AI advancements like larger context windows and cheaper tokens will commoditize low-level optimizations, rendering efforts in context reduction, retrieval tweaks, or custom multi-agent systems obsolete for startups. Human-centric areas are more durable: intuitive product design, seamless integrations with existing tools, and user training to leverage AI effectively in real workflows.

#why open source matters - NemoClaw, hardened version of OpenClaw from Nvidia, running Qwen locally and sandboxed!

#this is the future of software development, self maintaining software, reminds me a lot of what OpenClaw already does to itself…

#Claude down again - for over 5 hours and also imposed rate limits with no warning…we just don’t have enough compute!

#turns out you still need people for that pesky last mile in the enterprise 🤷🏻‍♂️

#🤯

#💯

#just the beginning of AI efficiency gains, frankly most companies doing layoffs have blamed AI, wait till AI really is implemented like this 👇🏻

Markets

#Walmart hits record highs while Saks goes bankrupt, AI is accelerating the brutal K-shaped split…but who actually wins?

#smart or desperate to catch up to Anthropic?

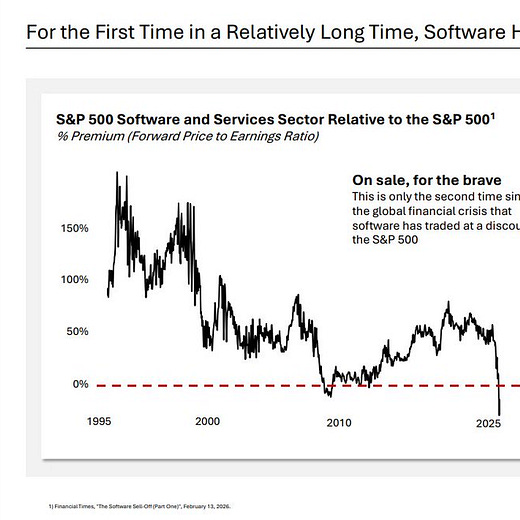

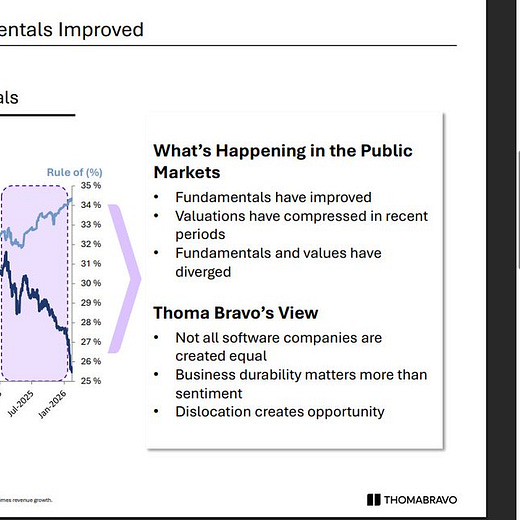

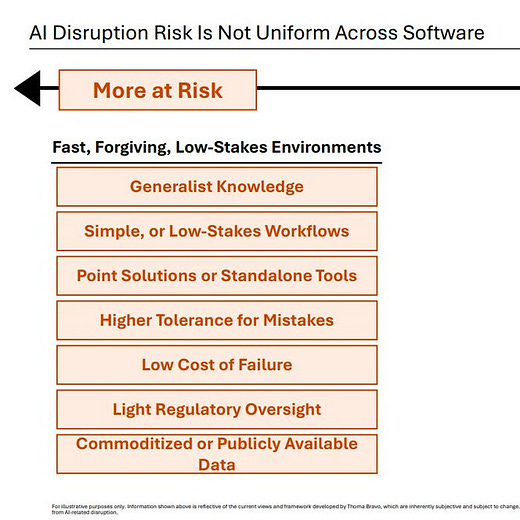

#speaking of PE, Thoma Bravo sharing its world view - sure, it’s cheaper than before while fundamentals have improved, but terminal value is so much less certain due to AI disruption

from Managing Partner Holden Spaht in his LinkedIn post:

AI disruption is real and profound, but not all software is equally exposed to the downside risk. Companies with generalist knowledge domains, simplified workflows, light regulatory oversight and limited switching costs are indeed more vulnerable. These types of firms don’t match our investment thesis, and we believe they have no place in our portfolio.

#AI is disrupting hedge funds as well…