What’s 🔥 in Enterprise IT/VC #478

Beyond Models: The Execution Intelligence Layer for Agents

Merry Christmas 🎄 and Happy Holidays.

This time of year tends to bring long retrospectives. But a few essays this past week stood out for a different reason: they put real historical context around where we actually are in the AI curve and where things are heading next.

Three in particular caught my attention: Ivan Zhao (Notion - Steam, Steel and Infinite Minds), Aaron Levie (Box - Jevon’s Paradox for Knowledge Work), and Jaya Gupta (Foundation Capital - AI’s Trillion dollar Opportunity: Context Graphs). From very different vantage points, they all converge on the same core idea: we are not just layering AI onto existing software. We are rebuilding how work, decisions, and enterprise systems function at their core.

A few shared themes emerged:

1/ This shift is structural, not incremental.

Enterprise software is being re-architected around AI-native systems, not upgraded SaaS.

2/ Context matters more than raw data.

The future isn’t just storing files or tickets, but understanding why decisions happen.

3/ Agents only become powerful inside rich context.

Workflows, intent, history, permissions, and outcomes are what turn agents from demos into systems.

4/ We’ve seen this movie 🎥 before.

Every major platform shift looks primitive until the right abstraction layer emerges. That’s when value creation accelerates.

5/ This is not a one-year hype cycle.

It’s a multi-year rebuild of how work gets done, who does it, and what enterprise software even means.

That brings me to the theme I’ve been thinking most deeply about over the past year: context. When we talk about context, we’re not talking about metadata or better prompts. We’re talking about decision-time understanding. Context is the combination of inputs, intent, constraints, history, permissions, exceptions, and outcomes that surround every real enterprise decision. It’s the difference between knowing what happened and knowing why it happened.

Most enterprise systems were built to store records. They were never designed to capture decision logic as it unfolds. That gap is now becoming the bottleneck for AI adoption.

This idea isn’t entirely new. We can look to the software development world for an early signal. Spec-driven development has emerged as one of the fastest-growing areas in AI, built around a simple insight: agents need explicit constraints to stay on rails.

In that world, specs become living documents. They encode intent, boundaries, and expected behavior, and they continuously evolve as agents act and learn.

What’s different now is scope.

Decision intelligence takes this idea beyond code and applies it to every enterprise workflow where agents interpret fuzzy human intent, make tradeoffs, and act in the real world. What’s emerging is a broader, more powerful abstraction: the Execution Intelligence Layer.

The execution intelligence layer sits between intent and infrastructure. It is where context is evaluated, decisions are made, actions are orchestrated, and outcomes are captured. It turns understanding into motion.

I wrote more about this in What’s 🔥 #464:

Specs are that policy layer in action. They don’t just apply to code, but can apply to every workflow where agents interpret fuzzy human intent and act on it. Once in place, specs become living documents that turn text into agentic action.

Aaron lays out the opportunity ahead and why context is bigger than we thought.

Jaya Gupta takes this one step further by naming the missing artifact. Agents don’t just need rules. They need access to decision traces that show how rules were applied in the past, where exceptions were granted, how conflicts were resolved, who approved what, and which precedents actually govern reality.

Agents don’t just need rules. They need access to the decision traces that show how rules were applied in the past, where exceptions were granted, how conflicts were resolved, who approved what, and which precedents actually govern reality.

This is where systems of agents startups have a structural advantage. They sit in the execution path. They see the full context at decision time: what inputs were gathered across systems, what policy was evaluated, what exception route was invoked, who approved, and what state was written. If you persist those traces, you get something that doesn’t exist in most enterprises today: a queryable record of how decisions were made.

and the kicker is this - why startups can win

Systems of agents startups have a structural advantage: they’re in the orchestration path.

When an agent triages an escalation, responds to an incident, or decides on a discount, it pulls context from multiple systems, evaluates rules, resolves conflicts, and acts. The orchestration layer sees the full picture: what inputs were gathered, what policies applied, what exceptions were granted, and why. Because it’s executing the workflow, it can capture that context at decision time – not after the fact via ETL, but in the moment, as a first-class record.

Together, these ideas point to the same conclusion: models don’t create leverage on their own. The execution intelligence layer does.

I’m seeing this across the board in a number of portfolio companies. As Aaron Levie put it, there is a much thicker layer above the LLM than was initially perceived.

The moat isn’t the query-response interaction. It gets much stronger when workflows are automated, when humans are pulled into decision-making, when exceptions are handled, and when systems learn from those outcomes to codify better behavior over time.

The last mile in the enterprise is always the longst. That’s exactly where the opportunity lies in 2026. As agent systems evolve into decision, execution, and learning engines, the value unlocked inside the enterprise will be massive. There is still a lot to build 🏗️ and fix to get there.

As always, 🙏🏼 for reading and please share with your friends and colleagues!

Scaling Startups

#the mindset of a winning founder

#solid advice

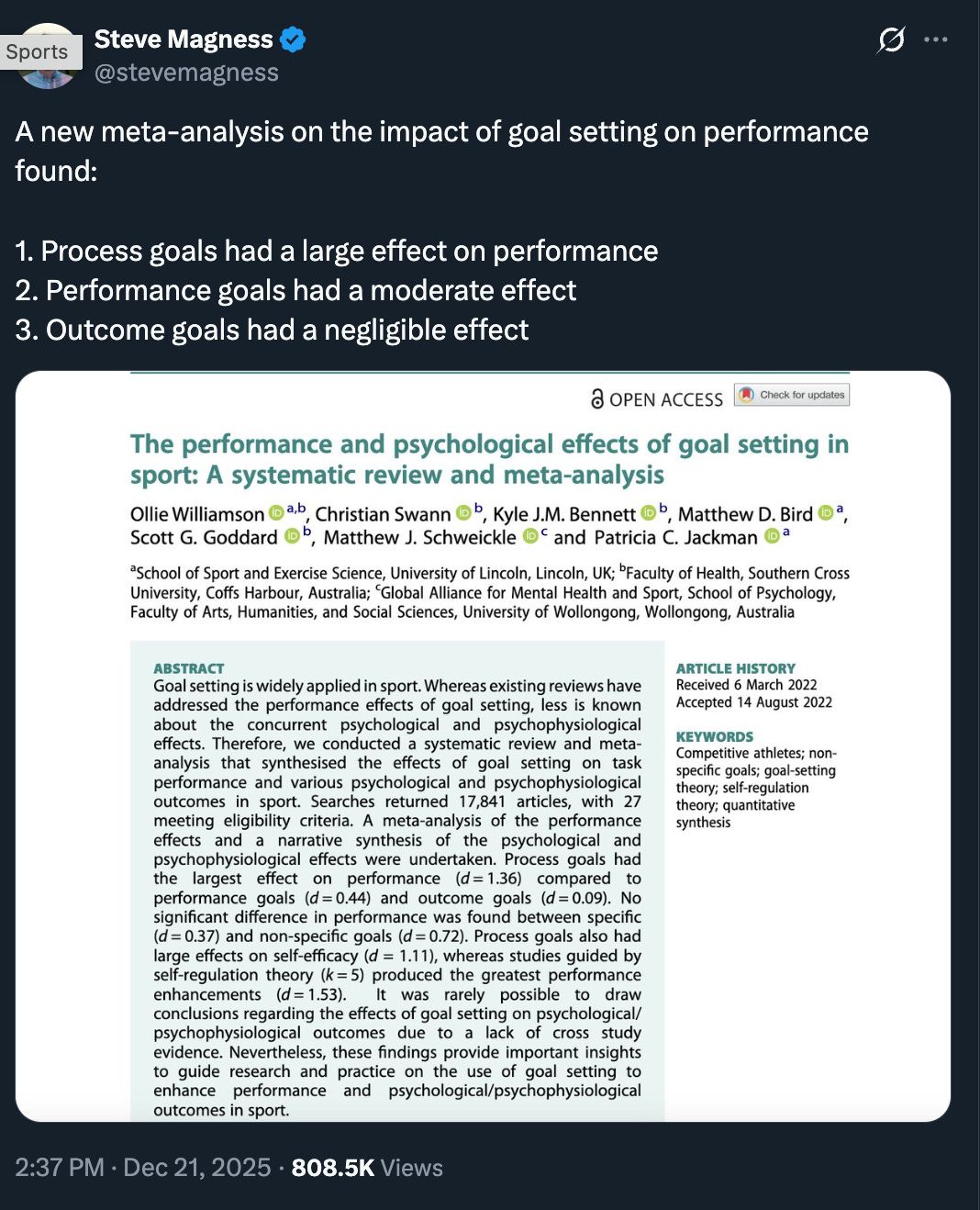

#🎯 focus on the goal of showing up day to day, embrace the grind

#

Enterprise Tech

#🤯 Nvidia announced a $20B Inference Technology Licensing Agreement with Groq on Christmas Eve. Groq last raised $750M at a. $6.9B valuation just a few months ago and is now “selling” for $20B. Here’s one of the better takes amongst the many on this deal

#more M&A rumors and this will continue into 2026 in a big way as market for observability, data infra, and security logging continue to merge - in world of AI just need it all in one place to derive proactive intelligence - following Palo Alto Networks acquisition of Chronosphere for $3.35B - who will Datadog buy to expand their security operations?

#social giveth and social taketh away - how not to respond from customer feedback - Coderabbit CEO - the mob can be brutal

#security like authorization and authentication at scale also desperately needed for this world (check out Keycard, one of my port cos)

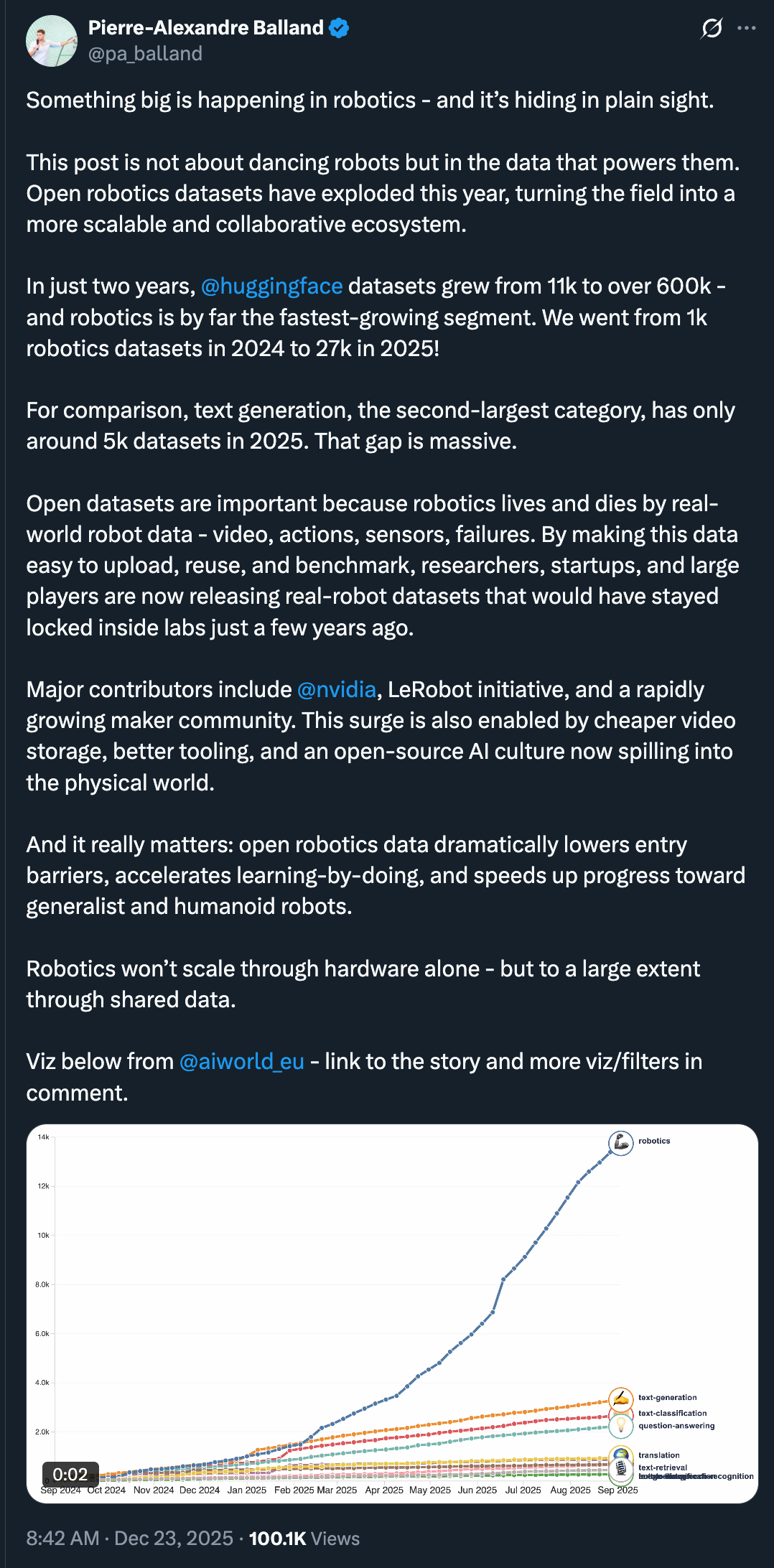

#one of biggest breakthrough in robotics will be open data sets

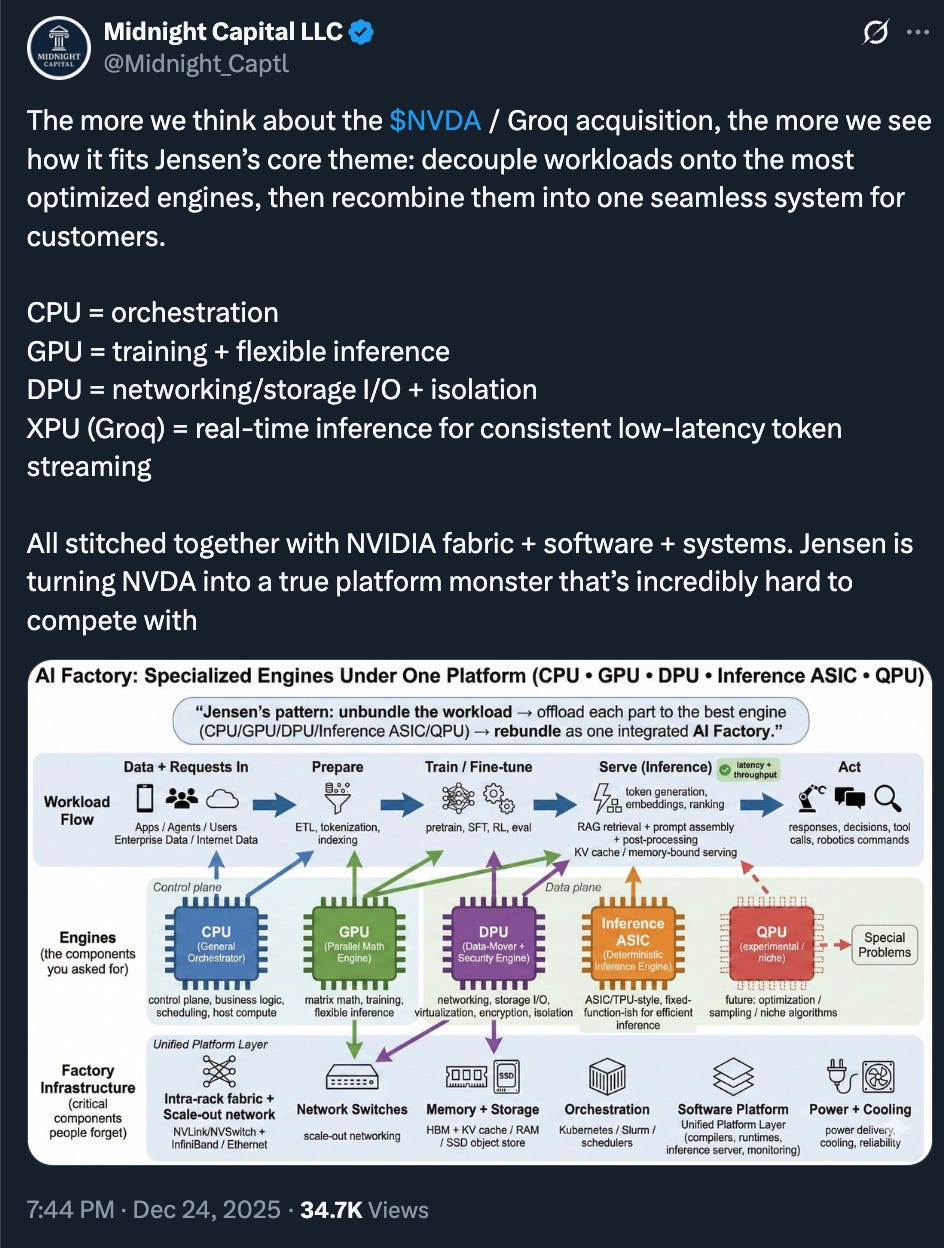

#Nvidia platform

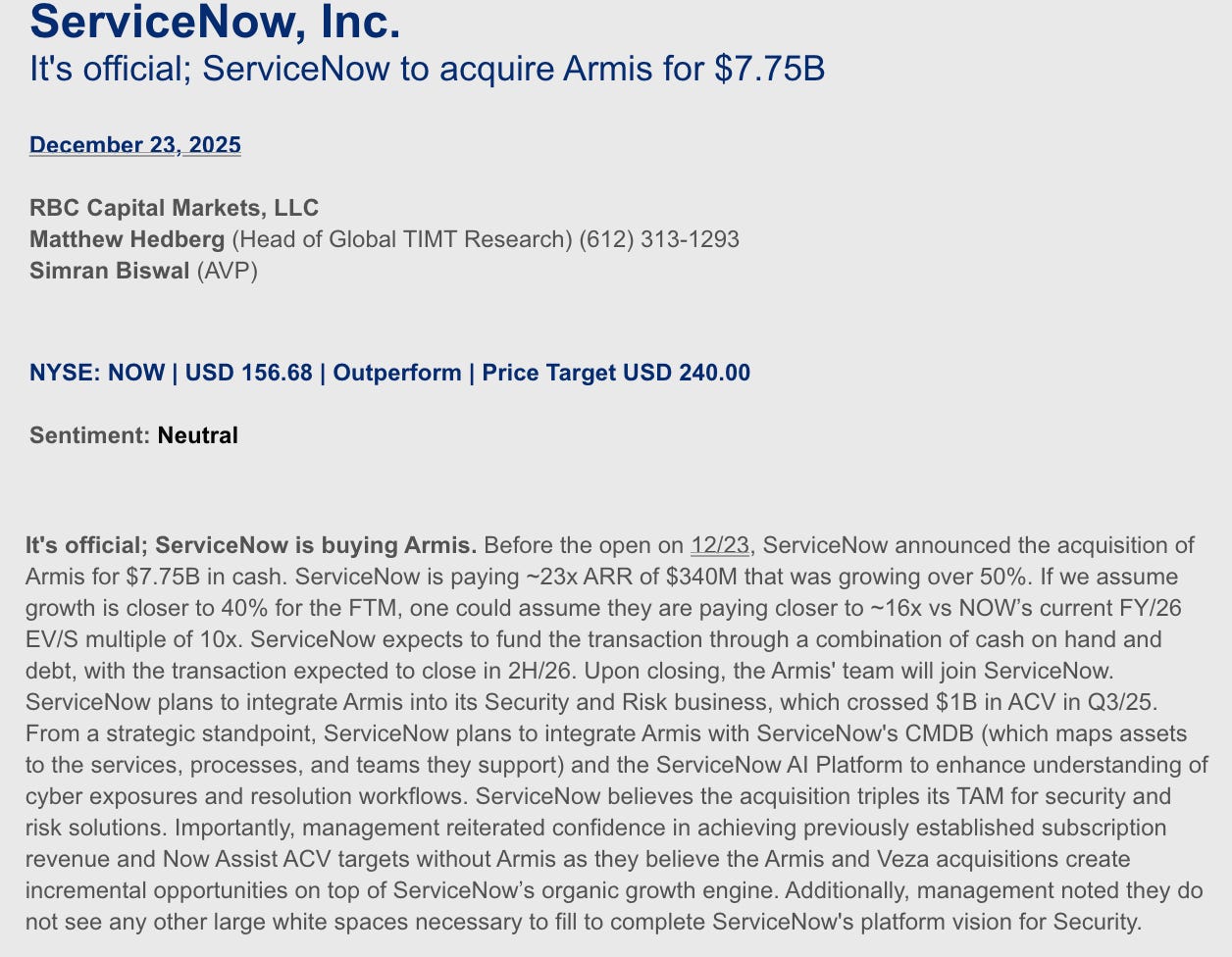

#what’s next for Israeli tech post Wiz ($32B) and Armis ($7.75B) cybersecurity startups - more startups of course! (Ctech)

Markets

#🤯 amazing outcome for everyone involved in Armis (RBC research)!

#the bullish perspective on economic growth

#👀

The "Context" space is fascinating... I think the big question is "Who," "What," and "How" will startups and existing infrastructure players capitalize on the opportunity to harvest, operationalize, and optimize Context... What does that layer look like... Does it complement, integrate, or replace existing semantic layers and metadata?

Here are a few Substacks and articles...For example, your comment:

"Context is the combination of inputs, intent, constraints, history, permissions, exceptions, and outcomes that surround every real enterprise decision."

Made me think about the Enterprise Context Management Substack; specifically, this article about a hierarchy and organization of context and how each level can be curated, why they're different, and governance for each, and organizes them into a conceptual framework: "The Enterprise Context Skyscraper": https://enterprisecontextmanagement.substack.com/p/skyscraper

The Propagation is another Substack centered around Context (specifically, Authority): "The AI Doesn't Know Who You Are": https://thepropagation.report/p/the-ai-doesnt-know-who-you-are

Then there's the Foundation Capital post about Context Graphs as the "Next Trillion Dollar Opportunity": https://foundationcapital.com/context-graphs-ais-trillion-dollar-opportunity/

Curious to learn about other key resources you /others have found!

Regarding Ivan's post, I highly recommend Power and Prediction by Agrawal, Gans and Goldfarb. They really get into the idea that like electrification (and other innovations), this is a major structural change and that we will eventually completely rearchitect how our systems are built. It will take years (decades?) to pull off. This means building from the ground up rather than stapling. We are starting to see this in a few businesses: AIFleet and Range Financial to name a couple. There will be fundamental changes to business models and margin profiles.

Regarding memory. Context is everything and I could not agree more that processes behind decision making will be the driving force. The key is introducing the right data into the process at the right time or in combination with other key data that adds information. This is defined by access and a lot of enterprise data is locked behind walls (think Salesforce). Humans make decisions by verifying data across silos and applying them to a structured process designed by humans. We have built software systems to help us organize and find that key data through databases and querying. The key is building processes for software/agents to verify data for agentic decision making processes so that they can make the decisions. Furthermore, providing access to the right data, in the right place at the right time will be key. The current system just doesn't do that and like with the introduction of electrification building "belts" to connect the power source to the function or machine just won't cut it. Electrical energy gave us new ways to build that were major improvements over mechanical energy. We need to "centralize" the data and bring everything to it. Centralization may be the key to abstraction.

Incumbent Saas vendors with large datasets (Salesforce, Workday, ServiceNow and others) will be hesitant to rearchitect systems in such a way where they lose control. Enterprise customers may come to demand access to and control of their data from their vendors when they realize it could impact financial performance. This will be a hard transformation and some will pull it off like Adobe did when they made the painful transition to Saas, but others will fail because they are stubborn.

One framework that seems to be gaining ground that could be part of the solution is BYOC. Enterprises will come to demand control of their data and who is invited to the party. I see a decoupling of control/compute/execution from the data plane- this has been happening with Iceberg and the Lakehouse where the data and compute are being decoupled. As mentioned above "centralization" is an abstraction layer and it may look something like the Lakehouse with multimodal data models and querying. Further, it may include the abstraction of the database - give AI access to the object store and the options and let the agent choose the database(s) best meant to build the functionality to make the decisions. This is already happening with Postgres. It would seem that the engines, pipelines, security, access control and verification and other infrastructure that hasn't been invented yet are key and need to catch up to storage if we are to begin to tear down the Saas silos and free the data for this new paradigm.